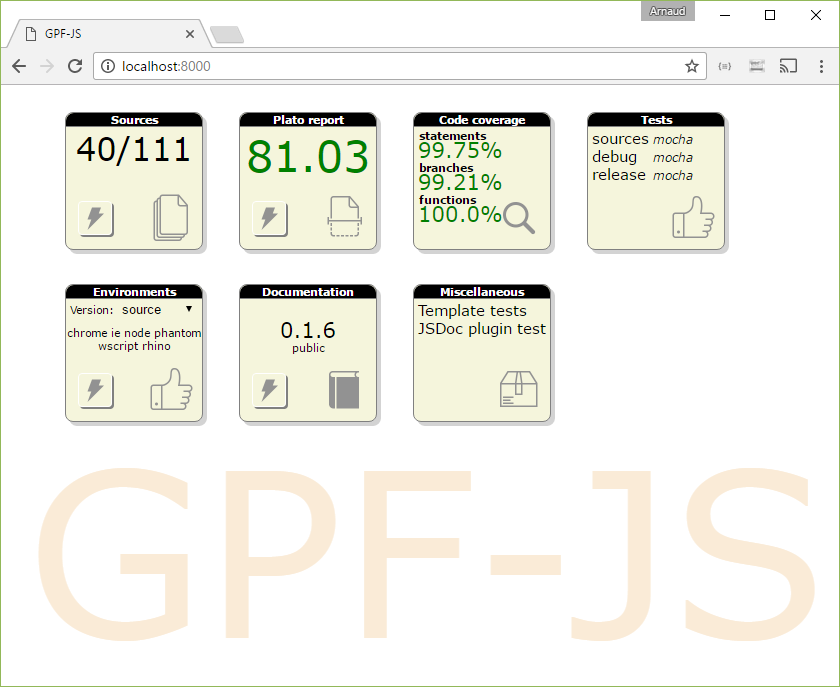

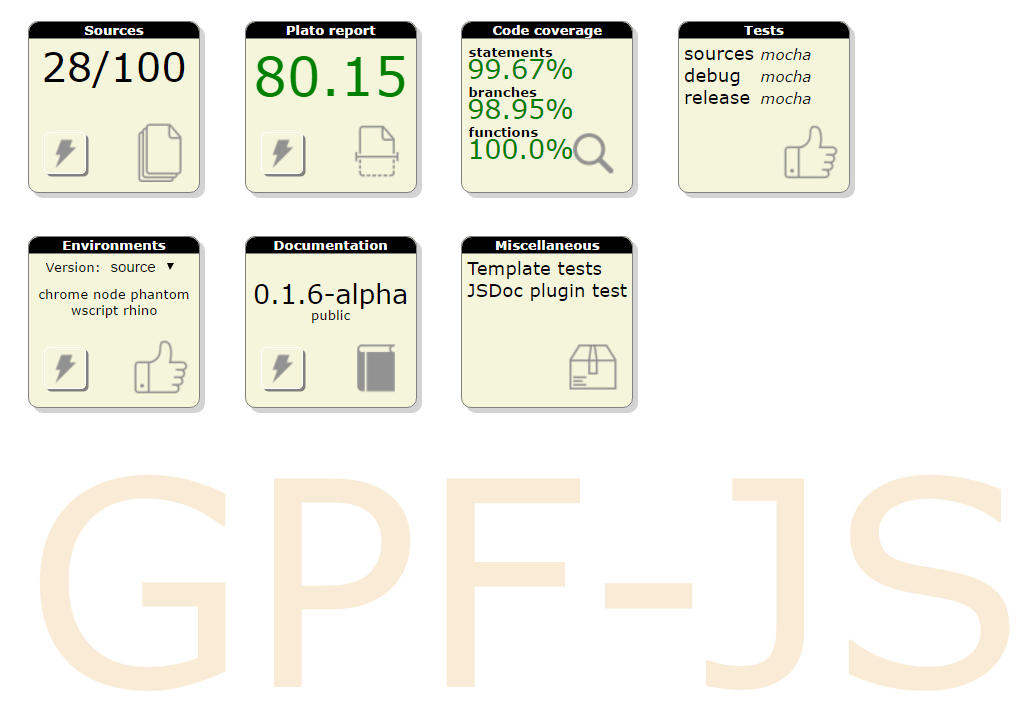

Release 0.1.6 of GPF-JS delivers a basic class definition mechanism. Working on release 0.1.7, the focus is to improve this implementation by providing mechanisms that mimic the ES6 class definition. In particular, the super keyword is replaced with a $super member that provides the same level of functionality. Here is how.

Introduction

The super keyword was introduced with ECMAScript 2015. Its goal is to simplify the access to parent methods of an object. It can be used within a class definition or directly in object literals. We will focus on class definition.

Class examples

To demonstrate the usage, let us define a simple class A.

    class A {
        constructor (value = "a") {
            this._a = true;
            this._value = value;
        }

        getValue () {
            return this._value;
        }
    }

In that example, the class A offers a constructor with an optional parameter (defaulted to "a"). Upon execution, it sets the member _a to true (this will be used later to validate the constructor call). Also, the member _value receives the value of the parameter. Finally, the method getValue exposes _value.

Then, let us subclass it with class B.

    class B extends A {
        constructor () {
            super("b");
            this._b = true;
        }

        getValue () {
            return super.getValue().toUpperCase();
        }
    }

When instances of B are built, the constructor of A is explicitly called with the parameter "b". Also, the behavior of the method getValue is modified to uppercase the result of the parent implementation.

None of these features are new to JavaScript. Indeed, the exact same definition can be achieved without any of the ECMAScript 2015 keywords.

For instance:

    function A (value) {
        this._a = true;
        this._value = value || "a";
    }

    Object.assign(A.prototype, {
        getValue: function () {
            return this._value;
        }
    });

    function B () {
        A.call(this, "b");
        this._b = true;
    }

    B.prototype = Object.create(A.prototype);

    Object.assign(B.prototype, {
        getValue: function () {
            return A.prototype.getValue.call(this).toUpperCase();
        }
    });

There are several ways to implement inheritance in JavaScript. In this example, the pattern used in GPF-JS is demonstrated.

Differences

Whether you use one syntax or the other, both versions of A and B will look (and behave) the same:

- A and B are functions

- A.prototype has a method named getValue

- b instances only have own properties _a, _b and _value

- b instanceof A works
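The points above can be checked with a few lines of code. The sketch below repeats a function-based A and B (using plain prototype assignment so that it runs on its own) and collects the results in a checks object, a name introduced only for this illustration. The same checks are expected to hold for the class version on a host that supports it.

```javascript
function A (value) {
    this._a = true;
    this._value = value || "a";
}

A.prototype.getValue = function () {
    return this._value;
};

function B () {
    A.call(this, "b");
    this._b = true;
}

B.prototype = Object.create(A.prototype);

B.prototype.getValue = function () {
    return A.prototype.getValue.call(this).toUpperCase();
};

var b = new B();

// "checks" is a hypothetical name, used only to gather the observations
var checks = {
    areFunctions: typeof A === "function" && typeof B === "function",
    parentHasGetValue: typeof A.prototype.getValue === "function",
    ownProperties: Object.keys(b).sort().join(","), // "_a,_b,_value"
    isInstanceOfA: b instanceof A,
    value: b.getValue() // "B"
};
```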

Class version (Chrome & Firefox only)

So, why would you use the super keyword?

As you may see in the examples, accessing the parent methods without super is possible but requires the knowledge of the parent class being extended. Furthermore, the syntax is not easy to remember... Well, after using it a thousand times, you end up knowing it by heart.

- In the constructor, super("b") is replaced with A.call(this, "b")

- In a method, super.getValue() is replaced with A.prototype.getValue.call(this)

As a consequence, any update in the class hierarchy would lead to a mass search & replace in the code.

Besides this, one could say that this keyword is a typical example of syntactic sugar, as it does not bring any new feature (if you forget about object literals).

Exploring the feature

Even if the documentation on super is extensive, some questions remain about the way it reacts to edge cases.

Redefining parent method

What happens if the parent prototype is modified? Does super call the modified method, or the method that existed when the child method was defined?

The link is dynamic.

Example (Chrome & Firefox only)

This is consistent with the function implementation: A.prototype.getValue re-evaluates the member every time it is called.
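As an illustration of this dynamic link, here is a minimal sketch (not the original example of this article) in which the parent method is replaced after the child class has been defined. The classes are simplified versions of A and B.

```javascript
class A {
    getValue () {
        return "a";
    }
}

class B extends A {
    getValue () {
        return super.getValue().toUpperCase();
    }
}

var b = new B();
var before = b.getValue(); // relies on the original A.prototype.getValue

// Replace the parent method after B has been defined
A.prototype.getValue = function () {
    return "modified";
};

var after = b.getValue(); // super.getValue now resolves to the new method
```

Here, before is "A" and after is "MODIFIED": the parent member is looked up each time the child method runs.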

Getting function object

Is it possible to access the parent method without invoking it? Does it return a function object?

It returns the parent function object.

Example (Chrome & Firefox only)

It is important to notice that if not invoked immediately (look at getSuperGetValue in the example), the value of this is undefined.
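The following sketch reconstructs this situation under stated assumptions: only the name getSuperGetValue comes from the text above, the rest of the code is written for this illustration. It shows that super.getValue gives back the parent function object and that calling it detached loses the context.

```javascript
class A {
    constructor () {
        this._value = "a";
    }

    getValue () {
        return this._value;
    }
}

class B extends A {
    // Returns the parent method without invoking it
    getSuperGetValue () {
        return super.getValue;
    }
}

var b = new B();
var superGetValue = b.getSuperGetValue();

var isParentMethod = superGetValue === A.prototype.getValue;

// Invoked on its own, the function has no context: class bodies are
// strict code, so this is undefined and reading this._value throws
var detachedError;
try {
    superGetValue();
} catch (e) {
    detachedError = e;
}

// Passing the context explicitly works as usual
var value = superGetValue.call(b);
```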

Checking parent method existence

Finally, how does the super keyword validate the member that is accessed? If you reference a non-existing member, does it fail when the class is generated or when the method is executed?

Accessing a non-existing member returns undefined.

Example (Chrome & Firefox only)

This is also consistent with the function implementation: nothing is checked when the class is defined, and an error is thrown only when the undefined member is invoked, at evaluation time.
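A short sketch of this behavior, written for this illustration (the member name missing and both method names are hypothetical):

```javascript
class A {}

class B extends A {
    getMissing () {
        return super.missing; // no such member on A.prototype
    }

    callMissing () {
        return super.missing();
    }
}

var b = new B(); // defining and instantiating B does not fail
var missing = b.getMissing(); // plain access gives undefined

var callError;
try {
    b.callMissing(); // fails only now, when undefined is invoked
} catch (e) {
    callError = e;
}
```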

A super idea

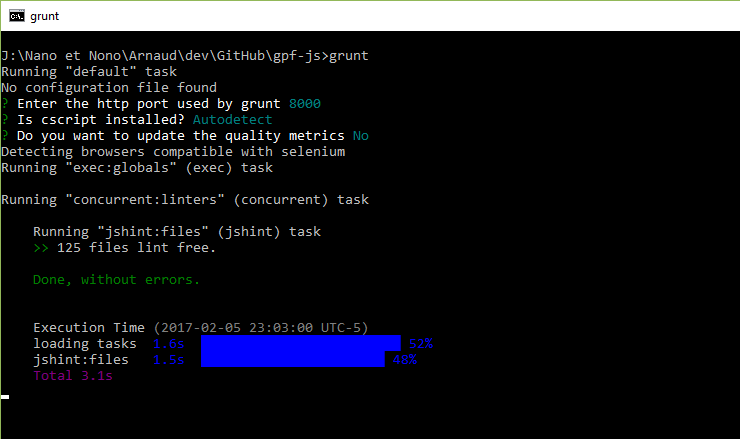

One of the goals of GPF-JS is to provide the same feature set whatever the host running the script. Because some of these hosts are old (Rhino and WScript), recent language features are not available, and this also prevents the use of transpilers.

Transpilers like Babel are capable of generating compatible JavaScript code out of next-gen JavaScript source.

gpf.define is a class definition helper exposed by the library since version 0.1.6. But it would not be complete without a mechanism that mimics the super keyword in order to reduce the complexity of calling parent methods.

super being a reserved keyword, it could not be used. But as the library reserves $ properties for specific usage, the idea of defining $super naturally came up.

In order to make the $super keyword a global one (like super), the library had to tweak the global context object which generated lots of issues (leaks detected in mocha, validation errors in linters, the variable could already be defined by the developer...). So, $super had to be attached to the context of the class instance.

this.$super was defined and had to support two different syntaxes:

- Calling this.$super must be equivalent to super

- Calling this.$super.methodName must be equivalent to super.methodName

Class definition

The library internally uses an object to retain the initial definition dictionary, parse it and build the class handler. This class definition object is not yet exposed but will be in the future through a read-only interface.

This object is a key component of this implementation as it keeps track of the class properties such as the extended base class. This will be leveraged to access parent methods.

Object.getPrototypeOf could be used to walk up the prototype chain and retrieve the base methods. However, it is poorly polyfilled on old hosts and it does not work as expected with standard objects.
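For reference, on a host with a native Object.getPrototypeOf, retrieving a base method this way could look like the sketch below. getParentMethod is a hypothetical helper written for this illustration; it is not part of GPF-JS, which relies on its class definition object instead.

```javascript
// Hypothetical helper: looks for the method one level up the prototype chain
function getParentMethod (childPrototype, name) {
    var parentPrototype = Object.getPrototypeOf(childPrototype);
    return parentPrototype ? parentPrototype[name] : undefined;
}

function A () {}
A.prototype.getValue = function () {
    return "a";
};

function B () {}
B.prototype = Object.create(A.prototype);
B.prototype.getValue = function () {
    return getParentMethod(B.prototype, "getValue").call(this).toUpperCase();
};

var result = new B().getValue();
```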

Wrapping methods

In order to be able to cope with this.$super calls inside a method, the library has to make sure that the $super member exists before executing the method.

A long time ago, when studying JavaScript inheritance, I found this very interesting article from John Resig (the creator of jQuery).

It took me ages to fully understand its Class.extend helper but it demonstrates a brilliant JavaScript ninja technique: by testing the method with a regular expression, it is capable of finding out if a class method uses the _super keyword. If so, the method is wrapped inside a container function that defines the _super member for the lifetime of the call.

    // Check if we're overwriting an existing function
    prototype[name] = typeof prop[name] == "function" &&
        typeof _super[name] == "function" && fnTest.test(prop[name]) ?
        (function(name, fn){
            return function() {
                var tmp = this._super;

                // Add a new ._super() method that is the same method
                // but on the super-class
                this._super = _super[name];

                // The method only need to be bound temporarily, so we
                // remove it when we're done executing
                var ret = fn.apply(this, arguments);
                this._super = tmp;

                return ret;
            };
        })(name, prop[name])
        : prop[name];

Similarly, GPF-JS uses the same strategy to detect the use of $super and wrap the method in a new one that defines the value of this.$super upon execution.
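To make the strategy concrete, here is a minimal sketch of such a wrapper. It is not the GPF-JS code: wrapIfUsesSuper and usesSuper are hypothetical names, and the detection is assumed to be a simple test of the method source for .$super.

```javascript
// Hypothetical detection: the method source mentions .$super
var usesSuper = /\.\$super\b/;

// Hypothetical helper: wraps the method only when it uses this.$super
function wrapIfUsesSuper (method, parentMethod) {
    if (!usesSuper.test(method.toString())) {
        return method; // nothing to do, the method is kept untouched
    }
    return function () {
        var previous = this.$super; // backed up to support nested calls
        this.$super = parentMethod;
        try {
            return method.apply(this, arguments);
        } finally {
            this.$super = previous;
        }
    };
}

function A () {
    this._value = "a";
}
A.prototype.getValue = function () {
    return this._value;
};

function B () {
    A.call(this);
}
B.prototype = Object.create(A.prototype);
B.prototype.getValue = wrapIfUsesSuper(function () {
    return this.$super().toUpperCase();
}, A.prototype.getValue);

var result = new B().getValue();
```

Methods that never mention $super are returned as they are, so only the methods that need the member pay the cost of the extra call.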

The use of _gpfFunctionDescribe and _gpfFunctionBuild ensures that the signature of the final method will be the same as the initial one. Indeed, GPF-JS will soon enable interface validation, and method signatures have to match.

Dynamic mapping of super method

So, when the class is being defined, a dictionary mapping method names to their implementation is passed to gpf.define. This definition dictionary is enumerated so that when the $super use is detected in a method, the name of the parent method is deduced.

This name (as well as the members of $super, which are explained below) is remembered in a closure and passed to the function _get$Super before calling the method.

Building a new $super method object

The class definition method _get$Super creates a new function instead of reusing the parent one. The reason is quite simple: JavaScript functions being objects, it is allowed to add properties to them... and this will be required to define expected additional super method names.

But then you may wonder why the parent function object is not reused. Those additional member names could be backed up, overwritten and restored once the call is completed. In the end, it would allow the child method to use the members of the parent one.

However, there are several considerations here:

- This object could be frozen with Object.freeze meaning it would be read-only.

- This function object could be used elsewhere meaning that the modification could be visible outside of the method.

One may argue that this is also true for this.$super. However, since the use of this member is detected, it gets overwritten.

While writing this above comment, I realized that the current implementation has an issue. If you ever wonder why I wrote this article, this is a good reason.

- Even if super returns the parent method object, it would be extremely confusing to have members that are being used. Consider the following example: super.getValue, how do you know if it is a parent method named getValue or the member getValue of the parent method?

I suspect this is the reason why super() is supported only in constructor functions. Try using super() in a class method, you will get an error "SyntaxError: 'super' keyword unexpected here". this.$super overcomes this limitation.

- If developers expect to get members on the parent method, they would have a hard time defining and reusing them (not to mention the code complexity). This encapsulation prevents this bad practice and avoids headaches.
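The first consideration can be illustrated with a small sketch. The names parentMethod and $super are used here as plain variables for the illustration; this is not the _get$Super implementation.

```javascript
// Hypothetical parent method, frozen by its owner
var parentMethod = Object.freeze(function () {
    return "a";
});

// Adding a member fails: silently in sloppy mode,
// with a TypeError in strict mode
var added;
try {
    parentMethod.getValue = function () {};
    added = Object.prototype.hasOwnProperty.call(parentMethod, "getValue");
} catch (e) {
    added = false;
}

// A new function forwarding to the parent can be extended freely
var $super = function () {
    return parentMethod.apply(this, arguments);
};
$super.getValue = function () {
    return "member";
};
```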

Detecting $super members

Once $super is detected in a method content, the list of $super members is extracted using a regular expression.

This detection part is critical as it greatly improves performance by generating only what is required.

Then, for each extracted name, the member is created inside _get$Super right after allocating $super.
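A minimal sketch of such an extraction is given below. The helper extractSuperMembers and its regular expression are assumptions made for this illustration; the expression actually used by GPF-JS may differ.

```javascript
// Hypothetical helper: lists the member names accessed through .$super
function extractSuperMembers (method) {
    var source = method.toString(),
        memberPattern = /\.\$super\.([\w$]+)/g,
        names = [],
        match = memberPattern.exec(source);
    while (match !== null) {
        if (names.indexOf(match[1]) === -1) {
            names.push(match[1]); // each name is kept only once
        }
        match = memberPattern.exec(source);
    }
    return names;
}

var members = extractSuperMembers(function () {
    return this.$super.getValue() + this.$super.getName() + this.$super.getValue();
});
```

With this input, members contains getValue and getName. Since the source is scanned as text, a name that only appears in a comment would also be extracted, which costs one extra member but does not change the behavior.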

Invoking super methods

When calling this.$super(), the method $super would obviously receive the proper context.

However, things are more complicated when calling this.$super.methodName().

If you understand how JavaScript function invocation works, you know that inside methodName, this would be equal to this.$super.

And that is a function object.
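This can be verified with a few lines. In the sketch below, instance and its $super function are stand-ins created for the illustration, not objects produced by the library.

```javascript
var instance = {
    $super: function () {
        return this; // the context the function was invoked with
    }
};

instance.$super.methodName = function () {
    return this;
};

var directContext = instance.$super();            // instance
var memberContext = instance.$super.methodName(); // instance.$super, not instance
```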

So how can the library make sure that the proper context is transmitted to methodName?

Function binding could be used to force the value of this but then we would lose the possibility to invoke it with any context.

Function binding

Before Function.prototype.bind was introduced, people used to create a closure to force the value of this inside a function.

    Function.prototype.bind = function(oThis) {
        var fToBind = this;
        return function () {
            return fToBind.apply(oThis, arguments);
        };
    };

This concept was also made popular with jQuery.proxy.

The drawback is that, once a function is bound, it is no longer possible to change the context it is executed with.

Demonstration

    // log is assumed to print its argument (console.log is used here)
    var log = console.log.bind(console);

    function getValue() {
        return this.value;
    }

    // Passing the context
    log(getValue.call({
        value: "Hello World"
    })); // output "Hello World"

    // Binding
    var boundGetValue = getValue.bind({
        value: "Bound"
    });
    log(boundGetValue()); // output "Bound"

    // Trying to pass a different context
    log(boundGetValue.call({
        value: "Hello World"
    })); // output "Bound"

    // Trying to bind again
    var reboundGetValue = boundGetValue.bind({
        value: "Hello World"
    });
    log(reboundGetValue()); // output "Bound"

Back to the $super.methodName example, it requires a sort of weak bind: a method binding that could be overwritten with a different context using bind, call or apply.

Weak binding

$super being known when the methods are created, it can be compared with the value of this and substituted when matched.

This achieves the weak binding and allows the developer to bind, call or apply the method without any problem.
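The idea can be sketched as follows. weakBind is a hypothetical helper written for this illustration, and the $super object is built by hand; the library creates it in _get$Super.

```javascript
// Hypothetical helper: binds method to instance only when it is invoked
// with superObject as its context
function weakBind (method, superObject, instance) {
    return function () {
        // Invoked as superObject.member(): substitute the instance.
        // Any other context (bind, call, apply) is kept as provided.
        var context = this === superObject ? instance : this;
        return method.apply(context, arguments);
    };
}

function getValue () {
    return this._value;
}

var instance = {_value: "instance"};
var $super = function () {};
$super.getValue = weakBind(getValue, $super, instance);

var viaSuper = $super.getValue();                        // "instance"
var viaCall = $super.getValue.call({_value: "other"});   // "other"
var viaBind = $super.getValue.bind({_value: "bound"})(); // "bound"
```

Unlike a regular bind, the substitution happens only when the context is the $super object itself, so an explicit context always wins.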

Conclusion

There is no revolution in this article, and many will consider this implementation useless because they focus on modern environments and use the latest JavaScript features. However, my curiosity is satisfied, as I learned a lot about the super feature. Moreover, the library will soon deliver new features on top of this one that should make the difference.

This new release delivers the initial class mechanism as well as minor improvements.
